The 41st Annual Congress of the European Association of Urology (EAU26), one of the most important global academic platforms in urology, was held in London, UK, from March 13 to 16, 2026. This year’s congress introduced a special session titled “EAU Lab,” designed to highlight groundbreaking advances in basic and translational research in urology.

A study from the team led by Prof. Jianming Guo at the Department of Urology, Zhongshan Hospital, Fudan University, was selected for presentation in this session (Abstract No. A0454). The study introduced a novel vision–language foundation model for renal cancer, RenalCLIP, which stood out due to its innovative modeling approach and strong potential for clinical translation. The work was presented at the conference by Dr. Ying Xiong, who shared the research findings with the international academic community.

Addressing Two Major Challenges in Renal Cancer Management

Renal cancer diagnosis and treatment have long faced two major challenges:

- Diagnostic difficulty – particularly for small renal masses, whose imaging characteristics are often non-specific, making it difficult to distinguish benign from malignant lesions.

- Decision-making complexity – clinicians must not only determine whether a lesion is malignant but also estimate tumor aggressiveness and prognostic risk in advance in order to choose among surgical treatment, active surveillance, or follow-up management.

Artificial intelligence offers new opportunities to address these challenges. However, most existing models focus on single-task modeling and rely primarily on imaging data alone, without integrating the semantic information contained in radiology reports and clinical context.

More importantly, when applied to different centers, imaging equipment, or patient populations, many AI models show significant declines in performance, highlighting the problem of poor generalizability, which has limited real-world clinical implementation.

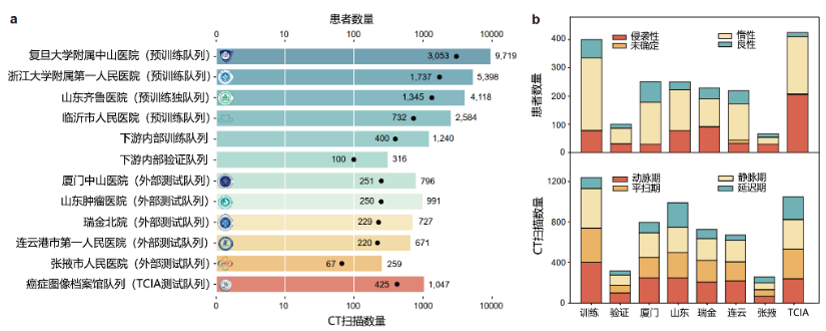

Building the World’s Largest Dedicated Renal Cancer Dataset

To address these limitations, Dr. Ying Xiong, under the guidance of Prof. Jianming Guo, led a multicenter collaborative study involving several institutions, including:

- Zhongshan Hospital, Fudan University

- Qilu Hospital, Shandong University

- The First Affiliated Hospital, Zhejiang University School of Medicine

- Linyi People’s Hospital

The research team also collaborated with Fudan University’s Digital Medicine Research Center and Microsoft Research Asia.

The study ultimately included:

- 8,809 patients

- 27,866 preoperative CT scans

These data spanned multiple medical centers, imaging phases, and pathological subtypes, forming the largest disease-specific dataset for renal cancer research worldwide.

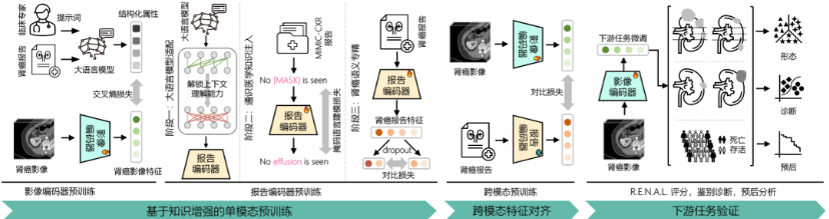

A New Modeling Strategy: Teaching AI to “Read Images” and “Understand Reports”

Instead of allowing AI to simply memorize imaging labels, the research team adopted a multimodal learning strategy.

The model was trained in three steps:

Step 1: Imaging Pretraining

The model first learned key radiologic features of renal tumors, including:

- Tumor morphology

- Enhancement patterns

- Anatomical complexity

Step 2: Text Pretraining

The model was then trained to interpret clinical semantics within radiology reports, improving its understanding of medical language.

Step 3: Cross-Modal Alignment

Finally, imaging and textual information were mapped into a shared semantic space, allowing the model to align the visual information seen on scans with the descriptions provided in radiology reports.

In simple terms, the system learns to link what is seen on imaging with how physicians describe those findings, enabling the model not only to classify tumors but also to develop a deeper understanding of renal lesions.
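The alignment idea in Step 3 can be illustrated with a small sketch. The article does not state the exact training objective, so the code below assumes a CLIP-style symmetric contrastive loss (suggested only by the model's name): each scan embedding should be most similar to the embedding of its own report and less similar to the other reports in the batch. The function names, the toy two-dimensional embeddings, and the temperature of 0.07 are illustrative assumptions, not details from the study.

```python
import math

def l2_normalize(vec):
    """Scale a vector to unit length so dot products become cosine similarities."""
    norm = math.sqrt(sum(x * x for x in vec))
    return [x / norm for x in vec]

def cross_entropy_diag(logits):
    """Mean cross-entropy where the correct column for row i is column i."""
    total = 0.0
    for i, row in enumerate(logits):
        m = max(row)  # subtract the max for numerical stability
        log_sum_exp = m + math.log(sum(math.exp(v - m) for v in row))
        total += log_sum_exp - row[i]
    return total / len(logits)

def clip_style_loss(image_embs, text_embs, temperature=0.07):
    """Symmetric contrastive loss over a batch of paired (scan, report) embeddings.

    Assumption: pair i in image_embs matches pair i in text_embs.
    """
    imgs = [l2_normalize(v) for v in image_embs]
    txts = [l2_normalize(v) for v in text_embs]
    # Similarity of every scan to every report, scaled by the temperature.
    logits = [[sum(a * b for a, b in zip(i, t)) / temperature for t in txts]
              for i in imgs]
    # Transpose to score every report against every scan.
    logits_t = [list(col) for col in zip(*logits)]
    return 0.5 * (cross_entropy_diag(logits) + cross_entropy_diag(logits_t))

# Toy batch: two scans and two reports (hypothetical embeddings).
scans = [[1.0, 0.0], [0.0, 1.0]]
aligned = clip_style_loss(scans, [[0.9, 0.1], [0.1, 0.9]])   # reports match scans
shuffled = clip_style_loss(scans, [[0.1, 0.9], [0.9, 0.1]])  # reports swapped
print(aligned < shuffled)
```

In this toy batch the correctly paired reports give a much lower loss than the swapped ones, which is the signal that pulls matching scans and reports together in the shared space.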

Superior Performance Across Multiple Clinical Tasks

The RenalCLIP model demonstrated superior performance across several clinically relevant tasks, including:

- R.E.N.A.L. nephrometry scoring

- Benign–malignant classification

- Tumor aggressiveness prediction

- Prognostic risk stratification

Compared with traditional convolutional neural network (CNN) models and internationally recognized pretrained models such as:

- Merlin (Stanford University)

- CT-FM (Stanford University & University of California, San Francisco)

RenalCLIP consistently delivered better predictive performance.

Key Results

In external multicenter validation:

- AUC for benign vs malignant diagnosis: 0.841 for RenalCLIP, compared with 0.717 for the CNN and 0.739 for CT-FM
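For readers less familiar with the metric, AUC can be read as the probability that a randomly chosen malignant case receives a higher predicted score than a randomly chosen benign case. The sketch below computes it that way on made-up labels and scores; the helper name `roc_auc` and the toy data are illustrative assumptions and have no connection to the study's data.

```python
def roc_auc(labels, scores):
    """AUC as the fraction of (positive, negative) pairs ranked correctly.

    labels: 1 for malignant, 0 for benign (toy convention for this sketch).
    Tied scores count as half a correct pair.
    """
    pos = [s for y, s in zip(labels, scores) if y == 1]
    neg = [s for y, s in zip(labels, scores) if y == 0]
    if not pos or not neg:
        raise ValueError("need at least one positive and one negative case")
    wins = 0.0
    for p in pos:
        for n in neg:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5
    return wins / (len(pos) * len(neg))

# Hypothetical predictions for six lesions.
toy_labels = [1, 1, 0, 0, 1, 0]
toy_scores = [0.9, 0.8, 0.7, 0.3, 0.6, 0.2]
toy_auc = roc_auc(toy_labels, toy_scores)  # 8 of 9 pairs ranked correctly
print(round(toy_auc, 3))
```

An AUC of 0.5 corresponds to random ranking and 1.0 to perfect separation, which is the scale on which the reported values should be read.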

The model also demonstrated more stable generalization ability in predicting tumor aggressiveness and clinical outcomes.

On the TCIA (The Cancer Imaging Archive) dataset, the model achieved:

- C-index for recurrence-free survival prediction: 0.726

This represented an improvement of approximately 22.6% compared with the best reference model.
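The C-index measures how often the model ranks patients correctly by risk: among comparable pairs of patients, it is the share in which the patient who relapsed earlier was assigned the higher predicted risk. The sketch below implements a basic version of Harrell's C-index on made-up follow-up data. The function name, the toy values, and the simple treatment of censoring are assumptions for illustration; the article does not describe how the study computed the metric.

```python
def concordance_index(times, events, risks):
    """Basic Harrell's C-index.

    times:  follow-up time for each patient
    events: 1 if recurrence was observed, 0 if the patient was censored
    risks:  predicted risk score (higher means earlier expected recurrence)
    """
    concordant = 0.0
    comparable = 0
    n = len(times)
    for i in range(n):
        for j in range(n):
            # A pair is comparable when patient i had an observed event
            # strictly before patient j's follow-up time.
            if events[i] == 1 and times[i] < times[j]:
                comparable += 1
                if risks[i] > risks[j]:
                    concordant += 1.0
                elif risks[i] == risks[j]:
                    concordant += 0.5
    if comparable == 0:
        raise ValueError("no comparable pairs")
    return concordant / comparable

# Hypothetical follow-up data for four patients (months, event flag, risk).
toy_times = [5, 10, 12, 20]
toy_events = [1, 0, 1, 0]
toy_risks = [0.5, 0.4, 0.6, 0.2]
toy_c = concordance_index(toy_times, toy_events, toy_risks)
print(toy_c)  # 3 of 4 comparable pairs are ordered correctly -> 0.75
```

As with AUC, 0.5 corresponds to random ordering and 1.0 to perfect ordering of patients by recurrence time.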

From Imaging Analysis to Clinical Decision Support

According to Prof. Jianming Guo, RenalCLIP not only addresses the long-standing problem of poor generalizability in existing AI systems, but also enables end-to-end precision support from imaging analysis to clinical decision-making.

The model has the potential to become a powerful tool for personalized treatment planning in renal cancer.

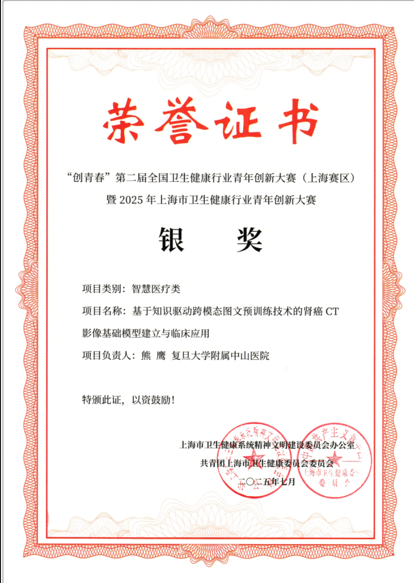

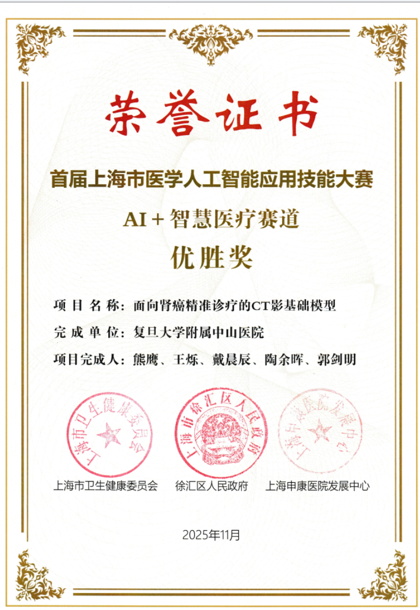

The project has already received several awards, including:

- Silver Prize in the Shanghai Health Industry Youth Innovation Competition

- Excellence Award in the First Shanghai Medical AI Application Competition

Its selection for presentation in the EAU Lab session represents strong recognition from the international urology community.

The research manuscript is currently undergoing revision at Nature Communications.

Prof. Jianming Guo

Prof. Shuo Wang

Dr. Ying Xiong